When you hire an elite Red Team, you start with an implicit signal of their talent. You review their resumes, their standing within the research community, and their certifications from trusted bodies like OffSec and CREST. You assume they can navigate your specific tech stack and pivot through your environment. But in offensive security, assumptions are liabilities.

The real shift happens the moment they submit their first vulnerability. Now, you have an explicit signal. A submitted report provides an undeniable data point: you know how their mind works, which tech stacks and software versions they can exploit, and the specific tactics—from SQL injection (SQLi) to complex business logic manipulation—they used to breach your perimeter.

As Red Teams increasingly work alongside AI agents, this same distinction between implicit and explicit signal should remain the baseline for benchmarking. However, current evaluation frameworks weigh theoretical scores from self-built labs too heavily and give too little weight to real-world explicit signals.

How to Validate AI Pentesting Results Beyond Lab Scores

For AI agents, the implicit signal is the data they’re trained on. We’re told a model has been trained on massive repositories of code and security research, so at the very least, that knowledge is encoded in its weights. On paper, these agents should be elite. However, until an agent is tested against a real-world production target, its capability remains theoretical.

The gap between these signals is most visible in environmental nuances. Consider a standard SQLi vulnerability. In a containerized lab environment, an AI agent succeeds quickly because the environment is fragile and predictable: it might return HTTP 200 for every valid request, providing a clear path for enumeration. The AI identifies the injection point and dumps the database because what it learned in training (the implicit signal) matches the lab’s predictable behavior.

However, in a real-world enterprise environment, that same AI often fails. It might encounter a Web Application Firewall (WAF) that uses rate limiting or signature-based detection to block rapid, repetitive tool calls. While a human tester would sense the WAF’s intervention and pivot to time delays or payload encoding to bypass the filter, the AI often falls into an infinite execution loop, repeating failed payloads until it suffers from context drift and forgets the initial state of the target.
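
The loop described above can be guarded against with a simple counter. The following is a minimal sketch, not a description of how any particular agent works: the `LoopGuard` class, its thresholds, and the choice of status codes that count as a "block" are all assumptions made for illustration.

```python
from collections import Counter
from dataclasses import dataclass, field

# Status codes treated as "a defense intervened" (an assumption for this sketch).
BLOCK_STATUSES = {403, 406, 429}


@dataclass
class LoopGuard:
    """Tracks blocked attempts per payload and decides the agent's next move."""
    max_repeats: int = 2   # identical blocked payloads tolerated before pivoting
    max_blocks: int = 5    # total blocked responses tolerated before stopping
    blocked: Counter = field(default_factory=Counter)

    def next_action(self, payload: str, status: int) -> str:
        if status not in BLOCK_STATUSES:
            return "continue"
        self.blocked[payload] += 1
        if sum(self.blocked.values()) >= self.max_blocks:
            return "stop"      # back off entirely and escalate to a human
        if self.blocked[payload] >= self.max_repeats:
            return "pivot"     # change technique instead of repeating the payload
        return "throttle"      # slow down before trying again


guard = LoopGuard()
for _ in range(3):
    print(guard.next_action("' OR 1=1--", 403))
```

The point of the design is that the decision depends on accumulated state rather than on the latest response alone, which is what separates adapting from looping.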

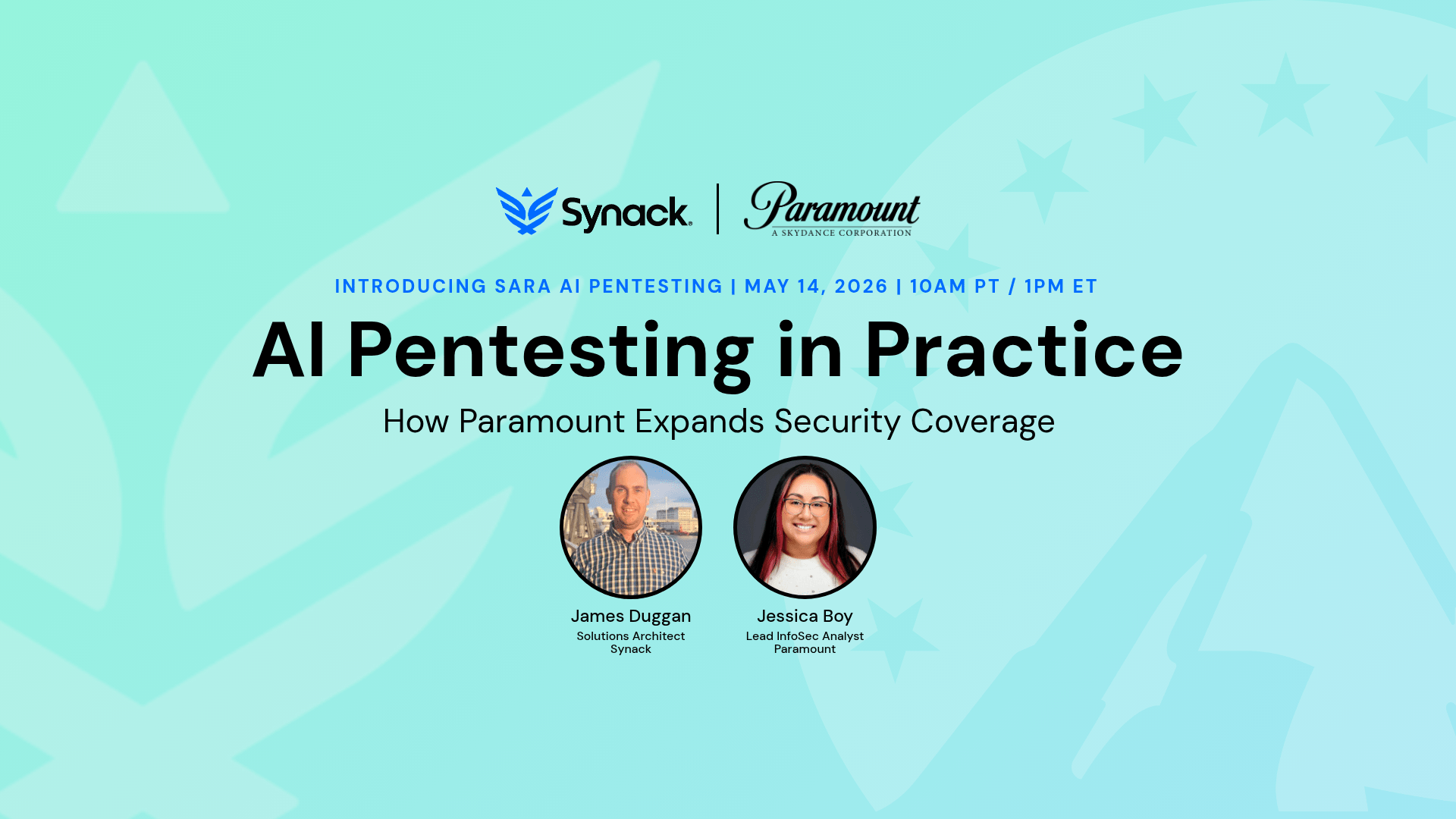

How Synack Stack-Ranks Red Team Researchers

To better evaluate AI agents, we need a benchmarking system that more closely aligns with how we currently stack-rank human Red Team researchers. For instance, our pentesting approach uses a leaderboard to track the impact and value our Synack Red Team (SRT) members deliver based on the following:

- Point economy: Researchers earn points based on the impact and customer value of their work, covering both what was done and how it was done.

- Vulnerability criticality: Higher CVSS scores yield stronger explicit signals, incentivizing the discovery of existential business threats over low-hanging fruit.

- Quality and reliability: Points are adjusted based on a signal-to-noise logic, forcing researchers to prioritize accuracy over raw submission volume.

- Patchability: This is measured by the customer’s speed to patch and the reduction of unaddressed vulnerabilities, aligned with NIST enterprise patch management standards.

- Trust: Customers must be able to trust researchers to stay in scope, take downstream consequences into consideration (showing restraint, self-throttling, etc.), and ask for permission when necessary to support a rigorous testing experience.

- Reliability: Researchers must always be ready to assist.

- Mastery / Repeatability: SRT members should be able to recreate success across different customers despite differences in their infrastructure.

- Sustained engagement: To ensure the signal remains current, Synack uses a rolling 365-day window for reputation calculation. If a researcher stops producing high-quality explicit signals, their level naturally decreases.
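
To make the interaction between criticality, signal-to-noise, and the rolling window concrete, here is a minimal sketch. It is not Synack's actual scoring formula, which is not given here: the `Submission` type, the `reputation` function, and the choice to multiply summed CVSS by accuracy are hypothetical, chosen only to show how the three ideas could combine.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Submission:
    submitted: date
    cvss: float   # CVSS base score, 0.0 to 10.0
    valid: bool   # accepted after triage (False means noise)


def reputation(subs: list[Submission], today: date, window_days: int = 365) -> float:
    """Sum of CVSS for valid findings inside the window, scaled by accuracy."""
    cutoff = today - timedelta(days=window_days)
    recent = [s for s in subs if cutoff < s.submitted <= today]
    if not recent:
        return 0.0   # no recent explicit signal, so the score decays to zero
    valid = [s for s in recent if s.valid]
    accuracy = len(valid) / len(recent)   # signal-to-noise adjustment
    return sum(s.cvss for s in valid) * accuracy


subs = [
    Submission(date(2025, 5, 1), 9.8, True),    # recent, valid, critical
    Submission(date(2025, 4, 1), 5.0, False),   # recent, rejected as noise
    Submission(date(2023, 1, 1), 10.0, True),   # outside the rolling window
]
print(reputation(subs, today=date(2025, 6, 1)))
```

In this sketch, the rejected submission halves the score and the old critical finding contributes nothing, so volume and past success alone do not sustain a ranking.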

What This Means for Your Security Team

The practical consequence of relying on lab-based benchmarks is one most security teams already feel: alert fatigue. When an AI agent is optimized for volume over accuracy, your team inherits the noise, triaging false positives instead of remediating real threats.

The signal-to-noise problem is especially acute in AI pentesting because the stakes are higher than with a standard scanner. An AI agent operating in a production environment can trigger WAFs, cause unintended downtime, or exhaust rate limits, creating incidents rather than preventing them.

Before deploying an AI pentesting agent at scale, security teams should ask three questions: Does it prioritize high-CVSS findings over low-hanging fruit? Can it demonstrate repeatability across different infrastructure? And when it hits a defense, does it adapt or loop?
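
The first two questions can be answered from an agent's findings log. The sketch below assumes a hypothetical `Finding` record and uses a CVSS threshold of 7.0 (the lower bound of the "High" severity band in CVSS v3); the field names and the two-environment minimum are illustrative choices, not an established metric.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    environment: str   # which target infrastructure the finding came from
    vuln_class: str    # e.g. "sqli"
    cvss: float


def high_severity_share(findings, threshold=7.0):
    """Fraction of findings at or above the CVSS threshold."""
    if not findings:
        return 0.0
    return sum(f.cvss >= threshold for f in findings) / len(findings)


def repeatable_classes(findings, min_envs=2):
    """Vulnerability classes found in at least min_envs distinct environments."""
    envs = {}
    for f in findings:
        envs.setdefault(f.vuln_class, set()).add(f.environment)
    return {cls for cls, seen in envs.items() if len(seen) >= min_envs}


log = [
    Finding("customer-a", "sqli", 9.1),
    Finding("customer-b", "sqli", 8.0),
    Finding("customer-a", "xss", 4.3),
    Finding("customer-a", "idor", 6.5),
]
print(high_severity_share(log), repeatable_classes(log))
```

A low share of high-severity findings, or successes confined to a single environment, suggests the agent's results may not transfer beyond the conditions it was tuned for.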

What Security Teams Need Before Trusting AI Agents in Production

Trust is the overarching factor that determines whether an AI agent moves into production environments. Currently, neither humans nor agents are perfect, but as you invest time into a human researcher, you create a feedback loop. They learn from their mistakes, they adapt to your specific no-go zones, and over time, the risk of a liability event decreases.

For an AI agent to reach parity, it needs to be trusted in the same way. It must demonstrate that it can learn from its failed payloads and infinite loops just as effectively as a human researcher. We should be able to know for sure that if an agent triggers a WAF and crashes a service today, it will have the contextual memory to avoid that same mistake tomorrow.
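
One way to picture "contextual memory" is a record of harmful actions that persists between runs and is checked before acting. This is a minimal sketch under that assumption: the `MistakeMemory` class, the JSON file, and the target and technique labels are hypothetical, and a real agent would need something far richer.

```python
import json
from pathlib import Path


class MistakeMemory:
    """Persists (target, technique) pairs that caused harm in earlier runs."""

    def __init__(self, path: Path):
        self.path = path
        self.entries = set()
        if path.exists():
            # JSON stores each pair as a list, so convert back to tuples.
            self.entries = {tuple(e) for e in json.loads(path.read_text())}

    def record(self, target: str, technique: str) -> None:
        self.entries.add((target, technique))
        self.path.write_text(json.dumps(sorted(self.entries)))

    def is_allowed(self, target: str, technique: str) -> bool:
        return (target, technique) not in self.entries


# Today: the agent crashes a service and records the mistake.
# Tomorrow: a fresh process loads the same file and skips that action.
```

Because the record lives outside the model's context window, it survives the context drift described earlier in this article.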

The arena is ready; now the agents must show they can stay in scope and deliver.

Key Takeaways

- Shift to explicit signals: Move evaluation from “what the AI knows” (implicit) to “what the AI can exploit” (explicit).

- Overcoming context drift: Real-world reliability requires AI to handle environmental nuances like WAFs without infinite loops.

- Standardized benchmarking: AI agents should be measured against the same CVSS and signal-to-noise metrics as elite human Red Teams.

- Trust as a variable: To gain trust, reliable AI must demonstrate the ability to learn from mistakes and exercise operational restraint.

Frequently Asked Questions

Why should I care about explicit signals if an AI vendor already provides high benchmark scores from their own testing labs?

Lab scores represent implicit signals: what the AI should be able to do based on its training data. In a controlled environment, variables are limited. An explicit signal is only generated when the AI operates against a real-world target. Security teams need to know if an AI agent can handle the noise and intentional countermeasures of a production environment, including WAFs, rate limiting, and custom business logic, where theoretical knowledge often fails.

We already use automated scanners; how is an AI agent benchmarked differently?

Standard scanners are often high-volume and high-noise. To benchmark an AI agent like an elite Red Teamer, we look at quality and reliability. This means using signal-to-noise logic: we reward the agent for accuracy and high-impact discoveries (using CVSS scores) rather than the raw volume of alerts. Similarly, we want to see the AI agent prioritize existential threats over low-hanging fruit.

What is the biggest technical hurdle preventing AI agents from being production-ready?

In a lab, an AI agent might succeed because the environment is fragile and predictable. In the real world, security controls and creative human ingenuity can cause it to fall into an infinite execution loop—repeating the same failed payload until it loses track of its original goal. In a real-world setting, the AI agent must demonstrate it can sense a defense and pivot its tactics just like a human.

How do we measure if an AI agent is actually learning or just getting lucky?

We apply the principle of mastery and repeatability. We look for the AI agent’s ability to recreate success across multiple similar deployments in the same organization, as well as across different infrastructures. Furthermore, trust is measured by whether it learns from a mistake. If an AI agent triggers a WAF and crashes a service today, it must understand the non-technical business impact of its actions and have the contextual memory to avoid that same mistake tomorrow.

How can I justify the trust factor of an AI agent to my Board of Directors?

Trust is benchmarked through operational restraint. Just as we rank and recognize human researchers at Synack, we evaluate AI agents on their ability to stay within scope, self-throttle to avoid downtime, and ask for permission when a move might have downstream consequences. An AI agent is only elite if it can provide a rigorous testing experience without becoming a liability to the business. If you’re not sure where your current AI pentesting program stands, assess your AI pentesting readiness with the Glasswing Assessment.